They Called Me the Google Queen. Now I'm Doing It Again.

When Google Workspace started making its way into Alberta classrooms, I was excited. Not cautiously interested — genuinely excited. I went to a Google professional development session in Kelowna, came back, and started moving fast. Spreadsheets for tracking student progress. Shared folders for resources. Calendars, hyperlinks, collaborative presentations. I was integrating it everywhere I could see a use for it.

Some colleagues couldn't keep up. A few got frustrated. They weren't wrong to feel that way — I was moving at a pace that left people behind, and that creates its own problems. But they started calling me the Google Queen, and I wore it without apology. Because I genuinely believed — and still do — that getting ahead of a technology shift, understanding it from the inside, is part of what it means to prepare students for the world they're actually entering.

That was over a decade ago. I'm watching the same dynamic play out now with AI. Some staff members are completely opposed to it. Some students are too. And I find myself, again, moving faster than the people around me are comfortable with.

Recently, while reading about resistance to technology in education, I came across a concept that stopped me: the Sisyphean cycle of technology panics. I had been seeing the pattern for years. I just didn't know it had a name. And when I read the research, I recognized something else too — a version of that cycle I had watched play out not in a staffroom, but in my own family.

Where I come from

My father is Mennonite. I grew up understanding, at close range, what it looks like when a community makes a deliberate choice to keep technology at arm's length — not out of ignorance, but out of a genuine conviction that some technologies threaten the things that matter most: community, faith, and a way of life built on relationship rather than convenience.

Research on Old Order Mennonite communities makes this distinction carefully: the avoidance of technology is not rooted in a belief that technology is evil, but in concern for the nature of community itself — a technology is set aside when it is judged likely to damage the bonds that hold people together.[6] I respect that logic. It comes from a place of deep intentionality about what kind of life a community wants to protect.

But I also watched what that avoidance costs. Farm equipment that could have reduced backbreaking labour, left unused. Internet and phones that could have connected isolated families to services and opportunities, turned down. And the particular difficulty, one I recognize in my own mother, of navigating a world that has moved forward while you stayed still. Trying to walk her through a Google Meet, watching her struggle with something that feels second nature to me, I feel the gap that avoidance creates over time. Not a moral gap. A practical one. A real one.

"I come from people who made a principled choice to step back from technology. I made a different choice. Both decisions have consequences — and I have watched both play out up close."

Chosen ignorance and the fear of contamination

There is something else I grew up close to that I have been thinking about more lately, as I listen to the strongest objections to AI in my school.

In some Mennonite communities, there was a practice — not universal, but there — of deliberate illiteracy. Some community members chose not to learn to read, including not to read scripture. The reasoning was specific: if you did not know what the Bible said, you could not be judged by God for failing to live by it. Ignorance, in that logic, was not a failure. It was a shield.

I think about that when I hear colleagues say they don't want to know how AI works. That they'd rather not engage with it at all. That not knowing protects them somehow — from complicity, from compromise, from having to make difficult decisions about something they don't fully understand. I recognize that logic. I grew up near it. And I know what it costs over time.

The other pattern I grew up alongside was isolation — the deliberate withdrawal from broader society not simply to be self-sufficient, but to remain uncontaminated. The fear is specific: that exposure to outside ways of thinking will corrupt the community from within. That if you allow foreign ideas close enough, you will eventually become something you no longer recognize. Something impure.

I hear echoes of that in the way some people talk about AI. That it is soulless, that engaging with it means participating in something spiritually compromised. That there is a danger of contamination — of your thinking, your judgment, your authenticity — simply through contact. Some will frame it in explicitly moral terms: AI as the beast, engagement as selling your soul, resistance as the only way to stay pure.

I don't say this to mock those fears. I come from people who held versions of them sincerely, and whose lives were shaped by them in ways I respect even when I disagree. The desire to stay pure — of thought, of community, of self — is not a foolish desire. It comes from caring deeply about something real.

There is one more pattern worth talking about, because I see it operating in more spaces than people admit. In the communities I am describing, association carries moral weight. If you spend time with someone who practices something the community disapproves of, you are not seen as neutral. You are seen as endorsing it. Your judgment is called into question. The mechanism is not argument. It is social pressure. And it is extraordinarily effective at keeping people from engaging with things they might otherwise approach with curiosity.

I have watched artists and colleagues distance themselves from AI because they are afraid of what their peers and collectors will think of them for engaging. I understand that fear. I have felt versions of it. But I have also watched it used to shut down exactly the kind of inquiry that teachers are professionally obligated to pursue.

Art has never been safe. Possessing knowledge has never been safe. Every tool that expanded what humans could do or think or make was, at some point, the thing that careful, well-meaning people warned each other away from. The printing press. Oil paint. The camera. The internet. Google Docs, in a staffroom in Alberta, not that long ago.

"I would rather be judged for knowing than protected by not knowing."

The Sisyphean cycle — and why it matters

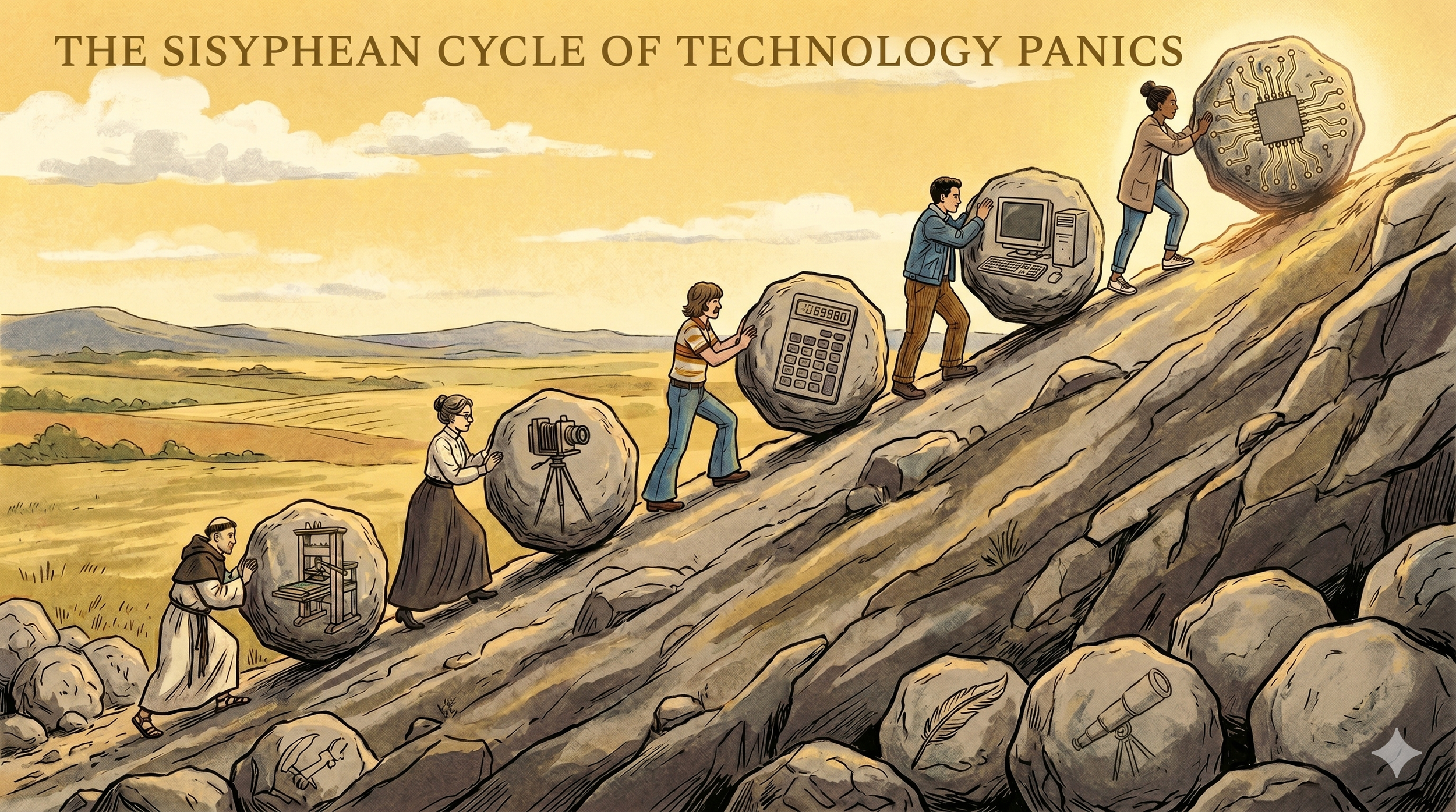

Researchers who study public reactions to new technology describe a recurring pattern: a new tool appears, people frame it as a threat to a valued human skill or way of life, institutions debate and restrict, and then — gradually — society adapts, redefines what counts as skill, and moves on. Until the next wave arrives and the cycle begins again.[1]

It's called Sisyphean after Sisyphus, the figure in Greek myth condemned to push a boulder uphill for eternity: the boulder never stays at the top. Each generation of educators and artists faces a version of the same anxiety, largely unaware that the generation before them faced it too.

The examples go back further than most people expect. In the fourth century BCE, Socrates argued — through Plato's Phaedrus — that writing would weaken memory and produce only the appearance of wisdom without real understanding. Monks resisted the printing press. Painters declared photography the end of their craft. Math teachers fought calculators. Schools spent years debating whether to allow students online at all.[1][5] And some communities, like the one my father grew up in, made the cycle's resistance stage into a permanent way of life.

4th century BCE
Writing (ancient Greece): will weaken memory and create the appearance of wisdom without real understanding

15th–16th century
Printing press (Renaissance Europe): an abundance of books will weaken serious study; knowledge will be stored outside the mind

19th century
Photography (Europe and North America): will make painting obsolete; there will be no market for human skill in representation

1970s onward
Calculators (schools worldwide): students will lose mental arithmetic; reasoning skills will atrophy

1990s–2000s
Internet (classrooms): distraction, plagiarism, misinformation; it will replace real research

2010s, my classroom
Google Workspace (Alberta schools): search will replace thinking; collaboration tools will enable copying

Now, my classroom
AI (everywhere, all at once): cheating, dependence, weakened writing, eroded originality

What struck me when I first read about this cycle wasn't the historical examples. It was recognizing that I had personally lived through two entries on that list — on the early-adopter side of both. And that the communities I grew up near were living proof that the resistance stage doesn't have to be temporary. For some, it becomes the destination.

It's already in my job description

Here's what grounds me when the debate gets loud: I don't have to resolve the cultural argument to know what I'm professionally required to do. Alberta's Teaching Quality Standard is clear. Competency 2 requires every teacher to maintain awareness of emerging technologies to enhance knowledge and inform practice.[2] Not when the consensus has settled. Not when the policy is comfortable. Throughout my career.

Competency 3 goes further:

Alberta Teaching Quality Standard · Competency 3 [2]

Teachers must incorporate digital technology to build student capacity for: acquiring, applying and creating new knowledge; communicating and collaborating with others; critical thinking; and accessing, interpreting and evaluating information from diverse sources.

That list is not a description of what AI can do for students. It's a description of what I am required to develop in them. And it maps directly onto what deliberate, reflective AI use actually builds — when it's taught with intention rather than simply permitted or simply banned.[3]

What AI literacy actually looks like

The assumption worth pushing back on is that AI is simply a shortcut — that students who use it are skipping thinking rather than doing it. Research on technology adoption consistently shows the same pattern: the tool itself is rarely the deciding factor. How deliberately it is introduced and structured is what determines whether it deepens or displaces thinking.[4]

Used with intention, AI helps students build a specific, learnable set of capacities:[1][3]

Task framing: translating a vague goal into a precise, useful prompt

Output evaluation: knowing when a result is good, almost right, or subtly wrong

Critical reading: checking AI-generated claims before accepting them

Iterative thinking: refining ideas through rapid cycles rather than single drafts

Judgment under ambiguity: deciding what AI should touch and what it shouldn't

Synthesis: combining AI output with personal knowledge and context

None of these capacities develops automatically. They develop the way any skill does: through structured practice, with feedback, over time.[5] Which is exactly what teachers are trained to design. The resistance I'm seeing from colleagues and students doesn't change what these skills require. It changes how carefully I need to introduce them.

Why I can't afford to be afraid of this

I think about my mother when I hear colleagues say they'll wait until things settle down. I understand the impulse completely. But I've seen, up close, what it looks like to be left behind by technology you chose not to learn — and I know how hard it is to close that gap once it has grown wide enough.

My boys are growing up in a world where AI is already part of the landscape. My students are entering one. If I'm afraid of it, I'm not just failing to learn it myself — I'm failing to prepare the people who are counting on me. I come from people who made a principled, intentional choice to step back from the world in order to protect what they valued. I respect that choice even as I made a different one. But I'm a teacher. My students don't get to opt out of the world they're entering. Which means I don't either.[4]

"The Sisyphean cycle keeps moving whether or not we engage with it. The difference is whether our students reach the adaptation stage with someone guiding them — or entirely on their own."

What I'm actually doing about it

I'm still working this out, and I want to be honest about that. The shift that has helped most is moving away from "did you use AI for this?" toward something more productive: "show me how your thinking changed as you worked with it." When students have to articulate what they prompted, what they received, what they changed and why — the assessment becomes about judgment, not output. That judgment is something I can teach, scaffold, and evaluate directly against what the TQS already requires of me.[2]

When I started integrating Google, I moved fast and learned by doing. Some of what I tried didn't work. I adjusted. I got better at knowing which tool belonged where and why. I expect AI to look the same — messier and slower than I'd like, more iterative than I'd prefer, and more necessary than the debate around it sometimes suggests.

I've been the person moving too fast before. I've also seen what it costs when people fall too far behind — and what it costs the students in their care. I know which side of that I want to be on.

Art has never been safe. Knowledge has never been safe. I would rather be judged for knowing than protected by not knowing.

What are other Alberta educators navigating? I'm genuinely curious what resistance looks like in your school — and what's starting to work.

References

[1] Orben, A. (2020). The Sisyphean cycle of technology panics. Perspectives on Psychological Science. journals.sagepub.com; km4s.ca

[2] Alberta Education. (2018). Teaching Quality Standard. open.alberta.ca

[3] The futility of resisting AI in education. lsst.ac

[4] Pew Research Center. (2025). Views of AI's impact on society. pewresearch.org

[5] Bates, T. (2014). A short history of educational technology. tonybates.ca

[6] Old Order Mennonite. Wikipedia; Turner, K. Old Colony Mennonites and digital technology. ideaexchange.uakron.edu

#AlbertaEducation · #TeachingQualityStandard · #AIinEducation · #AILiteracy · #HighSchoolTeacher · #SisypheanCycle · #TechnologyPanic · #GoogleForEducation · #EdTech · #FutureOfLearning · #ProfessionalLearning · #CriticalThinking · #TeacherPD